Key Takeaways

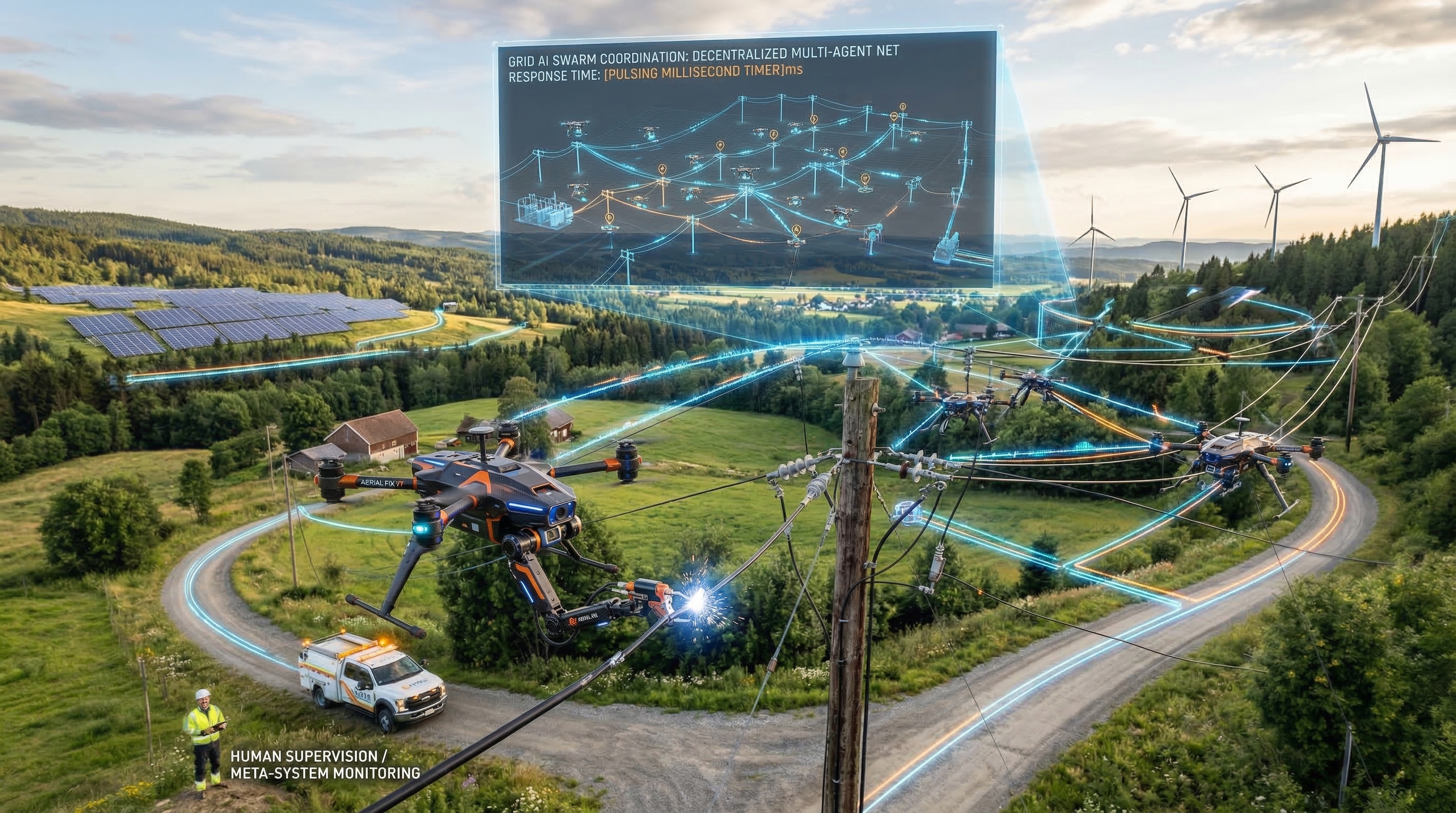

- Agentic AI systems autonomously plan, act, observe, and iterate—unlike chatbots, which respond passively to prompts.

- Auto-research agents already run code, modify systems, and iterate overnight at leading AI-native firms like Anduril and Scale AI.

- Engineering, product, and security teams benefit most—but most public deployments are hype-driven "AI-washing" with minimal actual autonomy.

- Regulatory frameworks (U.S. AI Executive Order, GSA contractor rules by 2026) now mandate audit trails and human oversight for agent decisions.

- You can start today: pick one workflow, bootstrap with the Claude API or open-source frameworks, and measure ROI over four weeks.

What's an Agentic AI? (And Why It's Not Your Chatbot)

Agentic AI systems autonomously plan and execute multi-step workflows without constant human input—they observe their environment, decide what to do next, and loop until goals are met.

Picture this: late Tuesday night, an AI agent spins up a testing harness, runs an experiment on your codebase, identifies a performance bottleneck in a critical service, modifies the code, runs the test suite twice to validate the fix, and by Wednesday morning drops a pull request with a summary of findings. No human pressed a button between "start" and "done." This is now standard practice at firms building AI infrastructure—not science fiction, not marketing copy, but operational reality.

The gap between a chatbot and an agentic system is philosophical and economic. A chatbot (like ChatGPT in a browser) waits for input, generates output, and stops. An agentic system decides what step comes next, takes it, checks the result, recalibrates, and repeats—usually without waiting for human permission. This shift from "advisor" to "employee" is rewriting enterprise workflows silently and unevenly across departments.

Andrej Karpathy, who led Tesla's AI efforts and now advises on AI systems architecture, has published research on agent evaluation loops that formalizes this distinction as a cycle: observe → plan → act → observe (repeat). Major AI vendors (Anthropic, OpenAI, and NVIDIA) have adopted this framework as the canonical model for autonomous systems. It has become the north star for how enterprises architect their AI operations infrastructure.
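To make the observe → plan → act cycle concrete, here is a minimal sketch of such a loop on a toy problem. Everything in it is an illustrative assumption: the `AgentState` class, the numeric "environment", and the `max_iterations` cap are hypothetical stand-ins, not any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    goal: int                       # hypothetical target the agent must reach
    value: int = 0                  # current state of the toy environment
    history: list = field(default_factory=list)


def observe(state: AgentState) -> int:
    """Read the environment: how far the current value is from the goal."""
    return state.goal - state.value


def plan(gap: int) -> int:
    """Decide the next step: move at most 3 units toward the goal."""
    return max(-3, min(3, gap))


def act(state: AgentState, step: int) -> None:
    """Apply the chosen step and record it for later inspection."""
    state.value += step
    state.history.append(step)


def run_agent(state: AgentState, max_iterations: int = 20) -> AgentState:
    """Loop observe -> plan -> act until the goal is met or the cap is hit."""
    for _ in range(max_iterations):
        gap = observe(state)
        if gap == 0:                # goal met, stop iterating
            break
        act(state, plan(gap))
    return state


final = run_agent(AgentState(goal=10))
print(final.value, final.history)
```

The iteration cap matters in practice: a loop that can act on real systems should always have a hard stop, even when the goal check is expected to succeed.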

What Is Auto-Research, and Why Does It Matter?

Auto-research is a closed-loop agent process where systems independently read sources, run experiments, synthesize findings, and report results with minimal human bottlenecking between steps.

Auto-research workflows compress weeks of manual research into hours of autonomous iteration. An agent ingests a research question, retrieves papers from your vector database, extracts methodology and findings, runs a simulation against your data, compares results to published benchmarks, and drafts a technical summary with confidence intervals and next-step recommendations.
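The last two steps of that pipeline (compare against a benchmark, report with a confidence interval) can be sketched in a few lines. This is a simplified illustration, assuming the simulation results are independent and that a normal approximation is acceptable; the function name and sample numbers are hypothetical.

```python
import statistics


def summarize_runs(results, benchmark):
    """Return the mean, an approximate 95% interval, and a benchmark check."""
    mean = statistics.mean(results)
    # Standard error of the mean from the sample standard deviation.
    sem = statistics.stdev(results) / len(results) ** 0.5
    half_width = 1.96 * sem         # normal approximation, rough for small samples
    low, high = mean - half_width, mean + half_width
    return {
        "mean": mean,
        "ci95": (low, high),
        "benchmark_within_ci": low <= benchmark <= high,
    }


# Hypothetical latencies (ms) from five simulation runs vs. a published figure.
summary = summarize_runs([10, 12, 11, 13, 9], benchmark=12)
print(summary)
```

For small samples a t-distribution would give a wider, more honest interval; the 1.96 multiplier is used here only to keep the sketch dependency-free.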

This isn't vaporware. Researchers at Anduril Industries (a defense AI company) and Scale AI (which builds training infrastructure) have deployed auto-research agents in production on codebases with millions of lines. Their agents debug complex systems, propose optimizations, and run validation cycles autonomously. The workflow mimics how senior engineers work: gather context, hypothesize, test, and report.

What Infrastructure Powers Agentic Workflows in 2026?

Agent frameworks, enterprise-tuned models, vector databases, and workflow orchestration platforms now stack together into production-grade autonomous systems.

Building an agentic system in 2026 doesn't require custom infrastructure. Five categories of tools have converged:

| Layer | Purpose | Key Tools | Maturity |
|---|---|---|---|
| Agent Frameworks | Multi-step workflow orchestration and loop control | LangChain, LlamaIndex, Anthropic Agents API, LangGraph | Production-Ready |
| Models | Reasoning and decision-making core | Claude 3.5 Sonnet, NVIDIA Nemotron 3 Super, GPT-4o | Enterprise-Grade |
| Knowledge Retrieval | Grounding agents in proprietary data | Pinecone, Weaviate, Milvus | Rapidly Growing (2024–2026) |
| Workflow Glue | Data pipeline and system integration | n8n, Make, Zapier | Stable |
| Observability | Monitoring agent decisions and cost | LangSmith, Arize, Humanloop | Emerging Standard |

The model layer is where 2025–2026 saw the biggest leap. NVIDIA released Nemotron 3 Super, trained on roughly 900 billion tokens of high-quality enterprise and technical data. It's purpose-built for knowledge work: document analysis, code generation, logical reasoning over structured data. Unlike general-purpose models, Nemotron 3 Super excels at the tasks agentic systems need to do repeatedly: read a codebase, understand intent, and generate fixes with minimal hallucination.

Vector databases such as Pinecone, Weaviate, and Milvus have seen explosive adoption since 2024. Enterprise teams now store internal documentation, past research findings, and domain-specific knowledge in them so that agents can query that material instantly. Grounding is what separates an agent that makes correct decisions from one that gets lost in hallucination.
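The core operation behind that grounding is a nearest-neighbor lookup over embeddings. The sketch below shows the idea in plain Python with hand-written three-dimensional vectors; real deployments would use a model-generated embedding and a vector database client, and the document names here are invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec, documents, k=2):
    """Return the ids of the k documents most similar to the query."""
    scored = [
        (cosine_similarity(query_vec, vec), doc_id)
        for doc_id, vec in documents.items()
    ]
    scored.sort(reverse=True)       # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Toy "embeddings"; a real system would compute these with an embedding model.
documents = {
    "deploy-runbook": [0.9, 0.1, 0.0],
    "oncall-policy": [0.1, 0.9, 0.1],
    "perf-tuning": [0.8, 0.0, 0.6],
}

print(top_k([1.0, 0.0, 0.2], documents))
```

The retrieved documents are then placed in the model's context so its answer draws on your material rather than on what it half-remembers.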

Where Are Companies Using Agentic Workflows Today?

Engineering, product/strategy, and security teams are leading adoption—but implementation quality varies wildly from autonomous loops to "AI-washed" automation. For real-world enterprise impact, read how agentic AI is reshaping workplace decisions and organizational workflows.

Engineering: Code Review and Optimization

The most mature deployments are in software engineering. An agent monitors your repository, pulls incoming pull requests, runs tests, measures performance metrics, reads the code changes, and either approves or flags issues with a detailed explanation. At Anduril, agents have evaluated thousands of code submissions, catching edge cases that manual review misses and surfacing performance regressions before they ship.

The economic model is clear: junior engineers spend 15–20% of their time on routine code review. If an agent handles 60–70% of straightforward reviews (style, test coverage, obvious bugs), that junior engineer is freed for architectural decisions and mentoring. The ROI is measurable in weeks.
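Multiplying those two ranges gives a rough sense of scale. The calculation below uses only the figures quoted above; the 40-hour week is an added assumption, and the result ignores the time a human still spends checking the agent's output.

```python
def review_time_freed(review_share, agent_coverage):
    """Fraction of total working time freed if the agent takes over
    `agent_coverage` of reviews that consume `review_share` of the week."""
    return review_share * agent_coverage


HOURS_PER_WEEK = 40  # assumption for illustration

low = review_time_freed(0.15, 0.60)    # low end of both ranges
high = review_time_freed(0.20, 0.70)   # high end of both ranges

print(f"{low:.0%} to {high:.0%} of the week")
print(f"{low * HOURS_PER_WEEK:.1f} to {high * HOURS_PER_WEEK:.1f} hours per week")
```

That works out to roughly 9% to 14% of a junior engineer's time, or about 3.6 to 5.6 hours in a 40-hour week.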

Product and Strategy: Market Simulation and Roadmap Generation

This is where most "AI-washing" happens. Many companies claim agents are running simulations and generating roadmaps autonomously. In reality, most are running structured templates with minor automation. True auto-research here looks like: agent ingests sales data, customer feedback, competitive intelligence, and market trend data from your warehouse, runs a Monte Carlo simulation of user adoption under three product scenarios, and recommends a prioritized roadmap with predicted confidence intervals.
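A stripped-down version of that Monte Carlo step might look like the following. It is a sketch under strong assumptions: adoption rate is modeled as a clipped normal distribution, the three scenario names and their parameters are invented, and the reported range is a 5th-to-95th percentile band rather than a formal confidence interval.

```python
import random
import statistics


def simulate_adoption(mean_rate, rate_sd, audience, trials=2000, seed=0):
    """Sample adoption counts for one scenario and summarize the spread."""
    rng = random.Random(seed)       # fixed seed keeps runs reproducible
    outcomes = sorted(
        # Clip the sampled rate to [0, 1] before scaling to users.
        min(1.0, max(0.0, rng.gauss(mean_rate, rate_sd))) * audience
        for _ in range(trials)
    )
    return {
        "median": statistics.median(outcomes),
        "p05": outcomes[int(0.05 * trials)],
        "p95": outcomes[int(0.95 * trials)],
    }


def rank_scenarios(scenarios, audience):
    """Simulate every scenario and order them by median adoption."""
    results = {
        name: simulate_adoption(mean, sd, audience)
        for name, (mean, sd) in scenarios.items()
    }
    ranked = sorted(results, key=lambda n: results[n]["median"], reverse=True)
    return ranked, results


# Hypothetical scenarios: (mean adoption rate, standard deviation).
SCENARIOS = {
    "feature_a": (0.10, 0.03),
    "feature_b": (0.15, 0.05),
    "feature_c": (0.25, 0.02),
}

ranked, results = rank_scenarios(SCENARIOS, audience=50_000)
for name in ranked:
    r = results[name]
    print(f"{name}: median {r['median']:.0f} (p05 {r['p05']:.0f}, p95 {r['p95']:.0f})")
```

The hard part in a real deployment is not the sampling loop but estimating the input distributions from sales data and feedback, which is exactly where the agent's data access matters.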

A handful of AI-native product teams (Scale AI, Anthropic) run this in production. Most enterprises are still in the "we filtered the data and a human still wrote the roadmap" stage. That's honest automation—not agentic autonomy.

Security and Compliance: Anomaly Detection and Incident Response

Security is the newest frontier. Agentic systems now scan logs, identify anomalies via statistical analysis and threat intelligence, and draft incident response playbooks for low-confidence alerts. Human security analysts review and escalate the high-confidence findings. This staged gate prevents false positives from triggering expensive incident response while still catching real threats.
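One simple way to implement such a staged gate is a z-score against a baseline with two cutoffs. The sketch below is illustrative only: the thresholds (`draft_z`, `escalate_z`), the hourly failed-login counts, and the route names are assumptions, and production systems typically combine several signals rather than a single statistic.

```python
import statistics


def route_alerts(baseline, new_counts, draft_z=2.0, escalate_z=4.0):
    """Score each new observation against the baseline and pick a route."""
    mean = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    routed = {}
    for label, count in new_counts.items():
        z = (count - mean) / sd     # how unusual this count is
        if z >= escalate_z:
            routed[label] = "analyst_review"          # strong signal: human looks first
        elif z >= draft_z:
            routed[label] = "agent_drafts_playbook"   # weaker signal: agent prepares a draft
        else:
            routed[label] = "ignore"
    return routed


# Hypothetical failed-login counts per hour.
baseline = [10, 12, 11, 9, 13, 10, 12, 11]
new_counts = {"02:00": 12, "03:00": 15, "04:00": 40}

print(route_alerts(baseline, new_counts))
```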

How Can You Spot Real Autonomy vs. AI-Washing?

AI-washing conflates marketing automation with true autonomous loops. Real agents iterate without human gates for 80%+ of workflows; "AI-washed" systems require escalation constantly.

Here's the uncomfortable truth: many enterprises claiming "agentic AI deployments" are actually just running rule-based workflows or simple automation with LLM wrappers. Tech journalists and investor decks have amplified the hype. Media coverage of "AI-washing layoffs" has surfaced the problem: companies rebrand organizational restructuring as AI automation, then lay off the humans who used to do the work—even though the AI isn't actually doing it autonomously yet.

How to spot the difference:

- Real autonomy: The agent runs 80%+ of its intended process without human bottlenecks. It makes decisions, executes them, and reports back.

- AI-washing: The agent suggests an action, waits for human approval, then executes. That's structured automation, not autonomy.

- Red flag: If the company fires half the team and claims the AI does the work but the AI actually can't run unsupervised for more than 30 minutes, that's AI-washing.
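The 80% test can be checked directly from a run log. Here is a minimal sketch, assuming each logged step records whether a human had to intervene; the `needed_human` field name and the two labels are hypothetical.

```python
def autonomy_ratio(steps):
    """Share of logged steps the agent completed without human intervention."""
    if not steps:
        raise ValueError("no steps logged")
    unattended = sum(1 for step in steps if not step["needed_human"])
    return unattended / len(steps)


def classify(steps, threshold=0.80):
    """Apply the 80% rule of thumb from the list above."""
    if autonomy_ratio(steps) >= threshold:
        return "real autonomy"
    return "structured automation"


# Hypothetical log: 10 steps, one of which required a human.
log = [{"needed_human": False}] * 9 + [{"needed_human": True}]
print(autonomy_ratio(log), classify(log))
```

Asking a vendor or an internal team for this number, computed from actual logs, is a quick way to cut through the marketing.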

Social media (particularly X/Twitter) and tech newsletters have crystallized "AI-washing layoffs" as the term for this phenomenon. It signals growing skepticism in the tech community about whether headline-grabbing "AI transformations" are real or performative.

Nexairi Analysis: The Signal Behind the Noise

Search volume for "agent frameworks" and "vector database" has spiked 200%+ since early 2025. This is a genuine infrastructure shift, not pure hype. But the gap between the infrastructure capability and actual business deployment is enormous. Enterprises have built the tools; they haven't yet redesigned their processes to use them autonomously.

The real risk isn't that agentic AI won't work. It's that mediocre implementations will burn trust and budget, making it harder for genuine autonomous workflows to get funded in 2027 and beyond.

What Must Enterprises Document for AI Governance in 2026?

The U.S. AI Executive Order (2023–ongoing) and GSA contractor rules (effective 2026) now mandate audit trails, bias measurement, and human oversight records for autonomous AI decisions.

The regulatory landscape has shifted. The U.S. Executive Order on Safe, Secure, and Trustworthy AI, signed in October 2023, requires federal agencies and government contractors to maintain transparent audit trails for AI decision-making. For deeper governance frameworks, see our analysis of agentic AI data governance and constitutional frameworks. Starting in 2026, the General Services Administration will enforce AI governance clauses in federal contracts, mandating:

- Documentation of data lineage (where did the agent's training data come from?)

- Bias audits and testing for discriminatory outcomes

- Human oversight gates for high-stakes decisions

- Public transparency about when AI agents make decisions
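In practice, most of these requirements reduce to writing a structured record every time the agent decides something. The sketch below shows one possible shape for such a record. The field names are assumptions chosen for illustration, not a schema taken from any regulation or contract clause.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(agent_id, decision, inputs, human_reviewer=None):
    """Build one audit-trail entry for a single agent decision."""
    # Hash a canonical form of the inputs so the record proves what the
    # agent saw without storing potentially sensitive data inline.
    payload = json.dumps(inputs, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "decision": decision,
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "human_reviewer": human_reviewer,
        "human_reviewed": human_reviewer is not None,
    }


record = audit_record(
    agent_id="triage-agent-1",                      # hypothetical identifier
    decision="route ticket to backend team",
    inputs={"ticket_id": 4821, "source": "webhook"},
)
print(json.dumps(record, indent=2))
```

Appending these records to write-once storage from day one is far cheaper than reconstructing decision history after an auditor asks for it.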

This creates a two-tier market: enterprises with strict compliance needs (defense, finance, healthcare) will architect agents conservatively with human gates and audit trails. Everyone else will move faster but risk regulators catching up. The smart move is to build governance now, not scramble later.

How to Start Building an Agentic Workflow (This Week)

Pick one low-risk, high-ROI workflow. Bootstrap with the Claude API and LangChain. Measure cost savings and time freed. Scale if you see an efficiency gain above 30% within four weeks.

Step 1: Identify Your First Workflow

Good candidates:

- Weekly data analysis report: Agent fetches data, runs analysis, writes summary. Human reviews and ships.

- Bug triage: Agent reads new tickets, categorizes by area, suggests priority, routes to team.

- Content QA review: Agent checks blog drafts for consistency, grammar, links. Drafts feedback. Editor finalizes.

- Proposal drafting: Agent reads RFQ, pulls from past proposals, drafts response. Sales team customizes and ships.

Avoid high-stakes first workflows (hiring decisions, financial approvals, customer-facing policy changes). Start where errors are recoverable and human review is already happening.

Step 2: Bootstrap with Existing Tools

You don't need to engineer from scratch. A workable starting stack:

- Agent Framework: Anthropic Agents API (if you want official support) or LangChain (if you want flexibility)

- Model: Claude 3.5 Sonnet (general-purpose) or NVIDIA Nemotron 3 Super (if processing enterprise documents)

- Vector DB: Optional—use Pinecone's free tier or Weaviate if you need grounding. Skip if your workflow doesn't require knowledge retrieval.

- Glue: n8n or Make if you need to connect APIs and workflows visually

A minimal agent loop for bug triage can be running in a single afternoon: ingest a ticket via webhook, call the Anthropic API, parse and categorize the response, post back to your ticket system. That's autonomy.
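The parse-and-categorize step is where most first attempts break, so it is worth sketching. In the example below, `call_model` is a local stand-in that fakes the model's reply so the code runs on its own; in a real setup it would be replaced by an actual API request. The category list and ticket fields are likewise hypothetical.

```python
import json

CATEGORIES = {"frontend", "backend", "infrastructure"}  # hypothetical team areas


def call_model(ticket_text: str) -> str:
    """Stand-in for the model call; returns JSON text like a real reply might."""
    area = "frontend" if "button" in ticket_text.lower() else "backend"
    return json.dumps({"area": area, "priority": "medium"})


def triage(ticket: dict, model=call_model) -> dict:
    """Classify one ticket, falling back to a human when the reply is unusable."""
    raw = model(ticket["body"])
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        parsed = {}
    if not isinstance(parsed, dict):
        parsed = {}

    area = parsed.get("area")
    if area not in CATEGORIES:
        # Never guess a route: malformed or unexpected output goes to a person.
        return {"id": ticket["id"], "route": "human_review"}
    return {
        "id": ticket["id"],
        "route": area,
        "priority": parsed.get("priority", "unknown"),
    }


print(triage({"id": 101, "body": "Submit button does nothing on checkout"}))
print(triage({"id": 102, "body": "Timeout"}, model=lambda text: "not json"))
```

Passing the model in as a parameter keeps the routing logic testable without network access, which helps when you later swap providers.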

Step 3: Design the Loop

Document:

- Input: Where does the agent get its starting data? (API, file, database query)

- Agent Logic: What does it do? (read, analyze, decide, generate)

- Output: Where does the result go? (report, code, ticket update, email)

- Human Gate: What happens if confidence is low? (escalate, flag for review, require approval)

Start with a conservative gate: if the agent's confidence score is below 85%, escalate to a human reviewer instead of executing. As you see patterns and build trust, lower the threshold to 80%, then 70%, so that fewer runs need review.
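The gate itself is only a few lines. This sketch assumes the agent produces a confidence score between 0 and 1, which is itself an assumption worth validating, since model-reported confidence is not always well calibrated. The function name and return shape are hypothetical, and the 0.70 default simply mirrors the 70% figure mentioned above.

```python
def gate(action, confidence, threshold=0.70):
    """Execute only when confidence clears the threshold; otherwise escalate."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    status = "executed" if confidence >= threshold else "escalated"
    return {"status": status, "action": action, "confidence": confidence}


proposed = [("close duplicate ticket", 0.92), ("reassign to infra", 0.74), ("mark as wontfix", 0.55)]

# The same proposals routed under two different thresholds.
for threshold in (0.70, 0.85):
    statuses = [gate(a, c, threshold)["status"] for a, c in proposed]
    print(threshold, statuses)
```

A higher threshold sends more work to people and a lower one sends less, so the threshold is effectively a dial for how much review load the team accepts.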

Step 4: Measure and Iterate

Track:

- Cost per run: (API calls + compute) / successful task runs

- Human time freed: (time per task normally) - (review time for agent output)

- Accuracy: (tasks agent got right) / (total tasks attempted)

- Escalation rate: What % hit the human gate?

If after four weeks you've freed 30% of human time for that workflow and the cost per run is <$0.50, you've got ROI. That's your signal to expand to a second workflow.
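The four metrics and the go/no-go check can be computed from a simple run log. The sketch below makes a few assumptions: a "successful" run is one marked correct, time freed is expressed as a share of the normal task time, and the per-run record fields are hypothetical.

```python
def workflow_metrics(runs, api_cost, compute_cost, baseline_minutes, review_minutes):
    """Compute the four tracking metrics from a list of run records."""
    successes = sum(1 for r in runs if r["correct"])
    if successes == 0:
        raise ValueError("no successful runs, cost per run is undefined")
    escalated = sum(1 for r in runs if r["escalated"])
    return {
        "cost_per_run": (api_cost + compute_cost) / successes,
        "minutes_freed_per_task": baseline_minutes - review_minutes,
        "time_freed_share": (baseline_minutes - review_minutes) / baseline_minutes,
        "accuracy": successes / len(runs),
        "escalation_rate": escalated / len(runs),
    }


def has_roi(metrics):
    """Apply the rule of thumb: at least 30% time freed, under $0.50 per run."""
    return metrics["time_freed_share"] >= 0.30 and metrics["cost_per_run"] < 0.50


# Hypothetical four-week log: 100 runs, 90 correct, 15 escalated.
runs = (
    [{"correct": True, "escalated": False}] * 85
    + [{"correct": True, "escalated": True}] * 5
    + [{"correct": False, "escalated": True}] * 10
)
metrics = workflow_metrics(runs, api_cost=30.0, compute_cost=6.0,
                           baseline_minutes=20, review_minutes=12)
print(metrics, has_roi(metrics))
```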

Why Should You Start Building Agentic Workflows Now?

Agentic autonomy is real, measurable, and accelerating. Most enterprises are still in the hype phase, but the gap between capability and deployment is closing fast.

The race is on. Competitors who move slowly will lose engineering hours, product insight velocity, and operational agility to teams architecting autonomous loops today. The infrastructure is available. The ROI is provable. The regulatory framework is clarifying. What's left is organizational will.

Your next move: pick one workflow this week. Sketch the loop. Get it working by Friday. Measure results. Then decide whether 2026 is the year you build or the year you get automated out of existence.

Sources

- Anthropic Documentation - Agent API and Multi-Turn Reasoning

- NVIDIA Technical Documentation - Nemotron 3 Super Model Training and Performance

- U.S. Executive Order on Safe, Secure, and Trustworthy AI - Federal Government and Contractor AI Governance Requirements

- Andrej Karpathy Published Research - Agent Evaluation Loops and Autonomous System Architecture

- Scale AI and Anduril Industries Case Studies - Production Agentic Workflow Deployments (2025–2026)

Fact-checked by Jim Smart