Key Takeaways

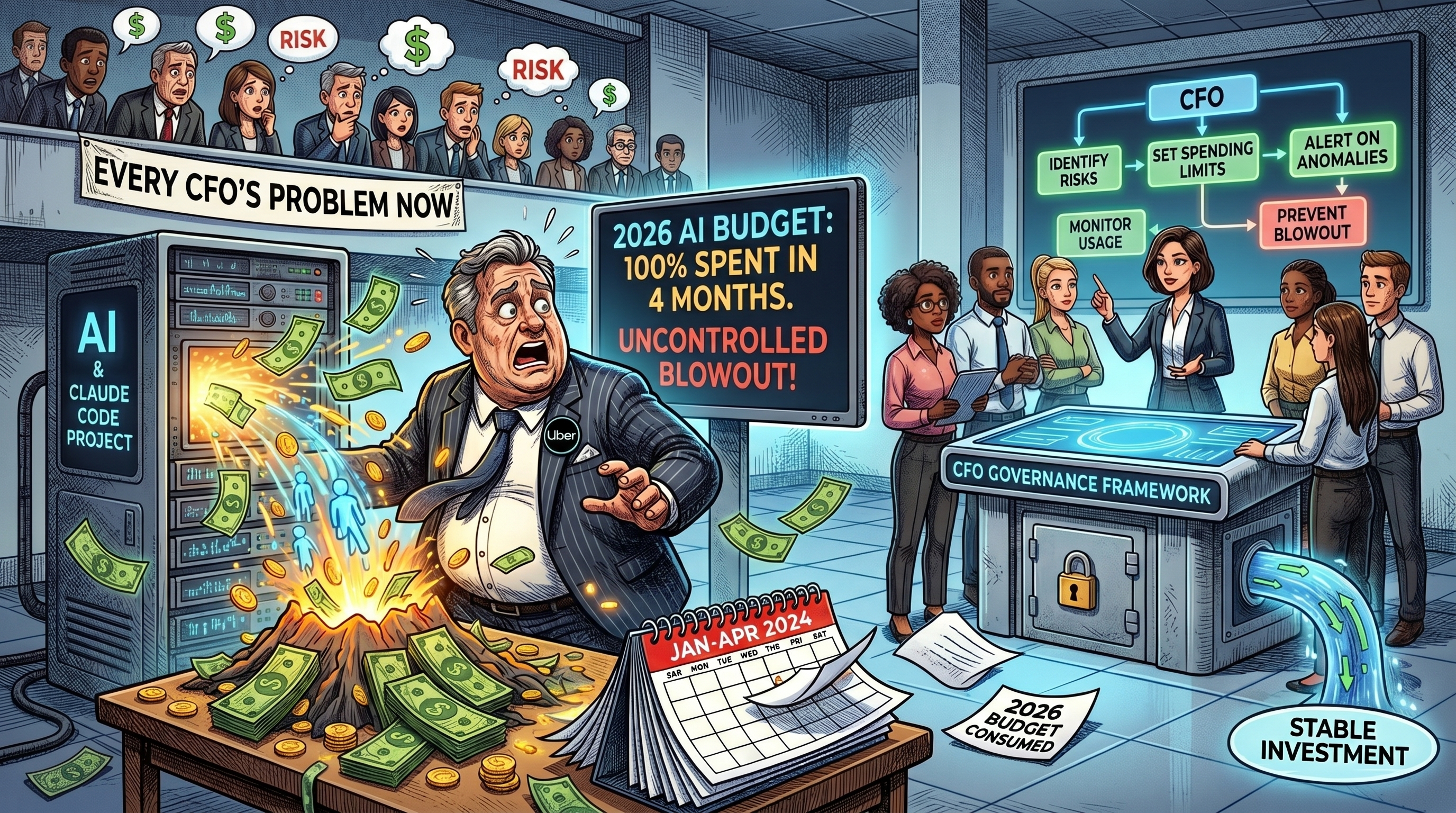

- Reports indicate Uber exhausted its full-year 2026 AI budget within four months after uncontrolled enterprise use of Anthropic's Claude Code developer tool.

- KPMG laid off approximately 400 U.S. advisory staff in early 2026, with company statements citing AI-driven productivity improvements in the advisory practice.

- Gartner projected in early 2026 that AI could unlock 10 margin points for CFOs by 2029 — but only with disciplined spend governance, not open-ended AI tool deployment.

- The gap between AI's margin potential and AI's budget risk is a governance problem, not a technology problem. CFOs need spending frameworks before engineers and developers self-deploy at scale.

What happened at Uber, and why does it matter to finance teams?

Reports say Uber burned through its entire 2026 AI budget in four months after engineers deployed Anthropic's Claude Code without enterprise spend controls in place.

The specifics are still emerging. What's clear is the structural picture: a large engineering organization started using a consumption-priced AI tool at scale, and the bill outran the budget before finance leadership could react. The tool worked fine. That's not the point. "Working" and "governed" aren't the same thing.

If this sounds unlikely at your organization, it's worth checking your current AI procurement policy — specifically whether it includes per-team spend caps on AI subscriptions and API usage.

What's the actual scale of AI spending risk in enterprise finance?

Enterprise AI spending is growing faster than most finance teams' governance frameworks can track, and usage-based pricing means there is no natural ceiling on cost.

The problem shows up in a few distinct ways:

Shadow AI procurement — individual teams and departments purchase AI tool subscriptions outside formal IT and finance review. Copilot licenses, API access, model subscriptions and specialized AI tools can accumulate across hundreds of cost centers with no central visibility.

Usage-based pricing without caps — many AI tools price on consumption: tokens processed, queries run, compute time used. A team that scales usage dramatically can generate a bill that looks nothing like the original budget line. Unlike a SaaS seat license, there's no natural ceiling unless you set one.

Developer tools with enterprise reach — tools like Claude Code, GitHub Copilot and Cursor are built for individual developers but deployed at enterprise scale. When thousands of engineers each use a consumption-based tool heavily, the aggregate spend can exceed the AI budget before finance notices.

| Risk Type | How It Grows | Governance Response |
|---|---|---|
| Shadow AI subscriptions | Teams buy tools outside IT/finance review; appear as miscellaneous SaaS costs | Mandate AI tool disclosure; create an approved vendor list with procurement pre-clearance |
| Usage-based API overages | Consumption scales faster than projected; no cap triggers before budget is exceeded | Set hard spend limits on API contracts; require usage alerts at 50% and 80% of budget |
| Developer tool proliferation | Individual developer licenses multiply across engineering; no aggregate budget owner | Consolidate AI developer tools under one budget owner; require per-team monthly reporting |
| Vendor lock-in via data dependency | AI tools accumulate institutional memory and context; switching costs grow silently | Include data portability requirements in AI vendor contracts; annual vendor review cycle |
| Productivity claims without ROI evidence | AI budgets approved based on projected savings that are never formally measured | Require measurable ROI criteria before AI tool renewals; pair AI spend with productivity tracking |
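The usage-alert response in the table can be sketched in a few lines of Python. This is a minimal illustration, not a production control: the `UsageBudget` class, the function names and the dollar figures are all hypothetical, and the 50% and 80% alert levels are taken from the table row on usage-based API overages. It also projects how many months of budget remain at the average burn rate so far.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class UsageBudget:
    """Hypothetical record for one usage-priced AI contract."""
    annual_budget: float                          # approved spend for the year, in dollars
    alert_levels: Tuple[float, ...] = (0.5, 0.8)  # alert fractions from the table


def alerts_triggered(budget: UsageBudget, spend_to_date: float) -> List[float]:
    """Return every alert level that spend to date has reached or passed."""
    used = spend_to_date / budget.annual_budget
    return [level for level in budget.alert_levels if used >= level]


def months_until_exhausted(budget: UsageBudget, spend_to_date: float,
                           months_elapsed: int) -> float:
    """Project the remaining months of budget at the average monthly burn so far."""
    if months_elapsed <= 0 or spend_to_date <= 0:
        raise ValueError("need at least one month of positive spend to project")
    monthly_burn = spend_to_date / months_elapsed
    remaining = max(budget.annual_budget - spend_to_date, 0.0)
    return remaining / monthly_burn


# Illustrative numbers only: a $1.2M annual budget with $600K spent after two months.
budget = UsageBudget(annual_budget=1_200_000)
print(alerts_triggered(budget, 600_000))           # [0.5]
print(months_until_exhausted(budget, 600_000, 2))  # 2.0
```

In this made-up case the 50% alert fires in month two and the projection shows the budget gone by month four, which gives finance a two-month window to cap or renegotiate rather than discovering the overrun after the fact.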

What does KPMG's advisory layoff signal about where this is heading?

KPMG's 400 U.S. advisory layoffs in early 2026, tied to AI productivity gains, suggest the profession has moved from AI experimentation to AI-driven headcount reduction.

For most of the past two years, AI tools landed alongside existing staff: additive rather than subtractive. KPMG's move suggests that's changing. The firm cited improved AI-driven productivity in its advisory practice, meaning the efficiency gains were real enough to translate into lower headcount, not just faster delivery.

Microsoft made a similar point in early 2026 when its CFO connected a round of employee reductions to AI productivity gains. When two of the most AI-invested organizations in the world start making that connection in public statements, finance teams elsewhere need to think through where that pressure lands next.

What is Gartner's CFO margin case for AI?

Gartner projected in early 2026 that AI could unlock 10 margin points for CFOs by 2029 — contingent on disciplined spend governance, not open-ended AI tool deployment.

That number shows up in a lot of AI vendor presentations. It's worth understanding what it actually requires.

Ten margin points is a transformational number for most businesses. Moving operating margin from, say, 18% to 28% is not an incremental improvement. It implies a fundamental shift in how work gets done — significant automation of processes that currently require substantial human labor, alongside the governance and operational discipline to prevent AI spending itself from eroding the gains.

The unstated condition in any projection like that is that AI spending has to be governed well enough not to eat the margin improvement from the other side. A team that saves $2 million in labor costs through AI automation but spends $1.8 million on ungoverned AI tool subscriptions has netted only $200,000, a tenth of the headline saving. That is not a 10-margin-point improvement.
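The arithmetic is worth making explicit. The sketch below uses the $2 million and $1.8 million figures from the example above; the $20 million revenue base is an assumption chosen purely for illustration, so that the gross saving equals exactly 10 margin points. The function name is hypothetical.

```python
def margin_points(amount: float, revenue: float) -> float:
    """Express a dollar amount as percentage points of revenue."""
    return amount * 100 / revenue


# Figures from the example in the text.
labor_savings = 2_000_000
ai_tool_spend = 1_800_000

# Assumed revenue base (not from the source), picked so gross savings = 10 points.
revenue = 20_000_000

gross_points = margin_points(labor_savings, revenue)
net_points = margin_points(labor_savings - ai_tool_spend, revenue)

print(f"Gross improvement: {gross_points:.1f} margin points")  # 10.0
print(f"Net improvement:   {net_points:.1f} margin points")    # 1.0
```

Under that assumption, ungoverned tool spend turns a 10-point headline gain into a 1-point net gain. The exact ratio will differ by company, but the direction of the effect does not.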

What framework should CFOs apply to AI spending now?

The Uber scenario, as reported, is a governance failure: AI deployed without the spend caps, budget owners or ROI criteria that any other major cost category would require.

A working framework for enterprise finance teams has four parts:

Centralized visibility — every AI tool, subscription and API contract in the organization should be visible to finance. IT and procurement share the obligation to flag AI-related spending regardless of how it's categorized in the general ledger.

Hard consumption caps — any AI tool with usage-based pricing needs a hard spending cap and an alert at 70–80% of that cap. This gives finance time to respond before the budget is gone. It's basic contract management applied to a new cost category.

ROI criteria tied to renewals — AI tool renewals should require the requesting team to show evidence of the productivity or revenue impact that justified the original purchase. No evidence, no automatic renewal.

A single budget owner — distributed AI spending with no accountable owner is what made the Uber scenario possible. Someone — a Chief AI Officer, the CFO's office, a dedicated steering committee — needs to own the consolidated AI budget and have authority to pause or cap spending when it runs ahead of plan.
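The four parts above translate naturally into a checklist that can be run against an inventory of AI tools. The following is a rough sketch under stated assumptions: the `AITool` record, its field names and the sample entries are all hypothetical, and a real inventory would come from procurement or general-ledger data rather than hand-written records.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AITool:
    """Hypothetical inventory record for one AI tool or contract."""
    name: str
    usage_based: bool                 # priced on consumption rather than per seat
    registered_with_finance: bool     # centralized visibility
    spend_cap: Optional[float]        # hard consumption cap, in dollars
    roi_criteria: Optional[str]       # evidence required at renewal
    budget_owner: Optional[str]       # accountable owner of the spend


def governance_gaps(tool: AITool) -> List[str]:
    """List which of the four framework parts this tool is missing."""
    gaps = []
    if not tool.registered_with_finance:
        gaps.append("not visible to finance")
    if tool.usage_based and tool.spend_cap is None:
        gaps.append("no hard consumption cap")
    if not tool.roi_criteria:
        gaps.append("no ROI criteria for renewal")
    if not tool.budget_owner:
        gaps.append("no budget owner")
    return gaps


# Illustrative entries only; the tool names are placeholders.
inventory = [
    AITool("coding-assistant", True, False, None, None, None),
    AITool("contract-review", False, True, None,
           "hours saved per review", "CFO office"),
]

for tool in inventory:
    print(tool.name, governance_gaps(tool))
```

A seat-licensed tool passes without a consumption cap because its cost already has a ceiling. The cap check applies only where pricing scales with usage.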

For firms thinking through how to evaluate and govern their AI investment strategy, see our analysis of how AI spending is now a going-concern risk factor that auditors are beginning to flag. And for the operations side — where AI agents can actually drive the margin improvements Gartner is projecting — our piece on 10 finance workflows AI can cut in half breaks down where the real time savings are.

The real risk isn't that AI is too expensive — it's that no one owns the number

The Uber situation, if the reporting is accurate, isn't primarily a story about AI being costly. Claude Code, by enterprise software standards, isn't an unusually expensive tool. The story is about what happens when consumption-based pricing meets a large engineering organization with no spend governor in place.

That condition exists at far more companies than Uber. Most enterprise finance teams have never had to govern usage-based AI tool spending at scale before this year. The procurement frameworks, approval workflows and budget category structures were designed before AI tools had per-token pricing models and before a single team could accidentally exhaust a budget line in a month.

KPMG's layoffs and Microsoft's productivity framing suggest the efficiency case for AI is real — the margin is there if you capture it. But the companies that capture it will be the ones that governed AI spending the same way they governed every other major cost category: with a budget, an owner, a cap and a measurement framework. The ones that didn't will look more like the Uber story.